The war between the United States, Israel, and Iran is not being fought only with missiles, drones, and military strikes. It is also being fought online, where false images can spread across the world in minutes and shape how people understand the conflict. One of the clearest recent examples involved a fake satellite image that appeared to show a destroyed US base in Qatar.

At first, the image seemed authentic. An Iranian news outlet posted it as visual evidence that US radar equipment at the base had been destroyed. Researchers later found, however, that the image was fake. It had been made or edited with artificial intelligence, so that an older satellite photo could be passed off as an image of the aftermath of an attack.

The case highlights a growing threat in modern warfare. Generative artificial intelligence is allowing state-controlled media outlets, propagandists, and online disinformation networks to churn out believable fake images that can deceive millions of people. In wartime, that can be devastating.

A Fake Image That Looked Real

Tehran Times, a state-aligned English-language newspaper, posted the fake image on X. It showed what appeared to be a before-and-after comparison of a US military base, with the second image seemingly showing the site devastated after an attack.

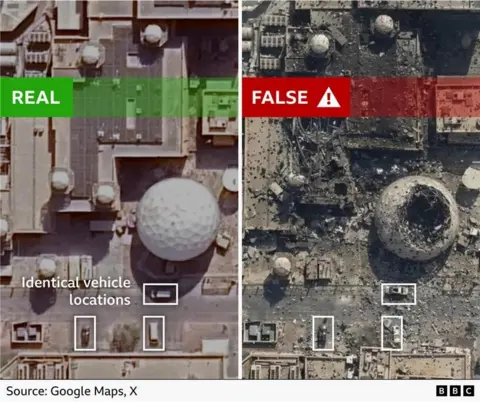

Researchers subsequently determined that the image showed no evidence of a strike in Qatar at all. Rather, it was an AI-edited version of a Google Earth image, taken last year, of a US base in Bahrain. In other words, the picture showed neither recent damage nor the location it claimed to show.

This type of manipulation is particularly dangerous because satellite imagery carries an air of authority. Many people assume that such images are objective, technical, and credible. When a fake image mimics that visual style, it can easily be believed.

Often the deception is revealed only by minor details. Here, researchers identified a row of cars that sat in exactly the same positions in both the real image and the altered one. The same layout appeared twice, implying that the second picture was built from the first rather than captured after a real attack.

Why These Images Spread So Easily

Despite being fake, the image went viral and was reportedly seen by millions of people across social media platforms and in multiple languages. That alone shows how easily wartime content crosses borders and audiences.

Part of the reason is speed. Social media rewards dramatic visuals, particularly in times of crisis. Users react emotionally, posting first and checking later, if at all. When a picture looks technical or official, most people assume it must be true.

Another reason is that AI-generated visuals are becoming harder to detect. Earlier fakes usually had obvious flaws. Today, many are polished enough to pass at a glance, particularly on a phone screen or in a trending news feed. That makes it harder for ordinary users to tell fact from fiction.

Researchers Warn of a Growing Pattern

Speaking about manipulated satellite imagery, Brady Africk, an open-source intelligence researcher, said that fake satellite images have appeared more often on social media following major world events, such as the current conflict in the Middle East. In his view, most of these fakes bear recognizable signs of flawed AI generation, including odd angles, blurred details, and invented features that do not match reality.

In other cases, the fake is not entirely generated. Someone can take a real satellite image and add signs of destruction, smoke, or damaged buildings that were never there. This approach can be just as effective because it starts from a realistic base image.

The Fog of War Makes Verification Harder

Information warfare analyst Tal Hagin pointed to another deceptive satellite image that circulated during the war. The image purported to show that Israeli and US jets had hit only an aircraft silhouette painted on the ground in Iran, and that the actual aircraft had been relocated.

The image was realistic enough to go viral on Instagram, Threads, and X. On closer inspection, however, it contained telltale details, such as meaningless coordinates embedded in the picture. AFP also detected a SynthID watermark, an invisible marker used to identify images made with Google's AI tools.

These examples show that the confusion of wartime creates ideal conditions for fake images to flourish. During conflicts, information is usually restricted. Governments may conceal or delay information, or shape narratives, for political reasons. That leaves gaps that open-source investigators and journalists try to fill with publicly available tools, such as satellite imagery, to understand what is happening.

A Problem Seen in Other Conflicts Too

Fake or AI-generated satellite images are not confined to the current war between the US and Iran. Similar cases were reported in Russia's war in Ukraine and in the four-day conflict between India and Pakistan last year. That suggests this is no longer an isolated tactic. It is becoming part of the standard wartime disinformation playbook.

As these tools become more accessible, more actors can produce deceptive images cheaply and quickly. A single fake photograph can spread faster than its correction, particularly when it confirms what people already want to believe. When that happens, debunking is rarely enough to reverse the initial impact.

Real-World Consequences Beyond Social Media

Analysts have also warned that manipulated satellite images can do more than mislead people online. They can affect public opinion, political pressure, and even financial markets.

According to Africk, fake imagery could shape how people think about critical questions, such as whether a nation should enter or escalate a war. In a tense environment, a misleading picture of a destroyed base or a successful strike can trigger emotional reactions and harden attitudes before the facts emerge.